Vivekananda Swamy Mattam

I'm a Master's student in Mechatronics and Robotics at NYU Tandon School of Engineering. Growing up in rural India, I saw firsthand the challenges of agriculture and its inefficient processes. When I visited my grandfather's workplace at Mahindra and watched robots handle tasks that once required intense human labor, something clicked: robotics wasn't just about building cool machines, but about creating systems that genuinely solve problems and reduce human burden.

NYU has been an incredible learning experience. I've worked on a Bell Labs-funded project distilling foundation models onto edge hardware for autonomous navigation, trained quadruped robots with reinforcement learning in Isaac Lab, and worked on visual SLAM and perception for the VIP Self-Drive project. Before coming here, I interned at Xmachines, an agricultural robotics startup, where I worked on sensor fusion and motion planning.

Right now, I'm looking for internship and full-time opportunities in robotics, autonomous systems, or applied AI. I want to work on projects where the technology actually matters, where robots solve real problems for real people.

Experience

Course Assistant - Autonomous Mobile Robots

NYU Tandon School of Engineering, Prof. Aliasghar Arab, 2024 - Present

Course Materials

Sole CA for a graduate-level robotics course. Created lecture notes, slides, and diagrams from the professor's raw notes, and built the course website that hosts all materials. The course covers vehicle kinematics, nonlinear control, Lyapunov stability, feedback linearization, MPC, Control Barrier Functions, motion planning, and verification and validation. The website has been adopted as permanent course infrastructure.

"Thank you to Vivek Mattam for his work in preparing and organizing this course website. Your dedication and attention to detail have made this resource exceptional." - Prof. Arab

Robotics Engineering Intern

Xmachines - Agricultural Robotics Startup, 2024

Built a Flask server for real-time sensor streaming, integrating MPU-6050 IMU data and Arducam IMX219 camera feeds into a unified monitoring interface for debugging robot state during field tests. Developed an object detection tunnel for the weeder robot that uses computer vision to classify objects by size and route them into collection boxes, automating a previously manual sorting process. Also worked on ROS-based motion planning for navigation in dynamic farm environments, on embedded platforms including the Jetson Orin Nano, Arduino, and ESP32.

Tech: ROS, Python, Flask, Embedded Systems, Jetson Orin Nano
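
The size-based sorting step can be sketched as a lookup from a detection's bounding box to a collection box. This is a minimal illustration, not the deployed code: it assumes a fixed, calibrated camera height so that a single `mm_per_px` factor converts pixels to millimetres, and the bin names and thresholds in `BINS` are hypothetical.

```python
from typing import Sequence, Tuple

# Hypothetical bins: (upper size limit in mm, collection box), ordered smallest first.
BINS: Tuple[Tuple[float, str], ...] = (
    (20.0, "small"),
    (50.0, "medium"),
    (float("inf"), "large"),
)


def route(bbox_px: Sequence[float], mm_per_px: float, bins=BINS) -> str:
    """Map an (x1, y1, x2, y2) pixel box to a collection box by its longest side."""
    x1, y1, x2, y2 = bbox_px
    # Assumes a fixed camera height, so one scale factor converts pixels to mm.
    size_mm = max(x2 - x1, y2 - y1) * mm_per_px
    for limit_mm, box_name in bins:
        if size_mm <= limit_mm:
            return box_name
    raise ValueError("bins must end with an unbounded entry")
```

For example, `route((0, 0, 80, 40), 0.5)` measures a 40 mm object and returns `"medium"`.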

Projects

I'm interested in autonomous navigation, visual SLAM, robot perception, and deploying learning-based systems on real robots.

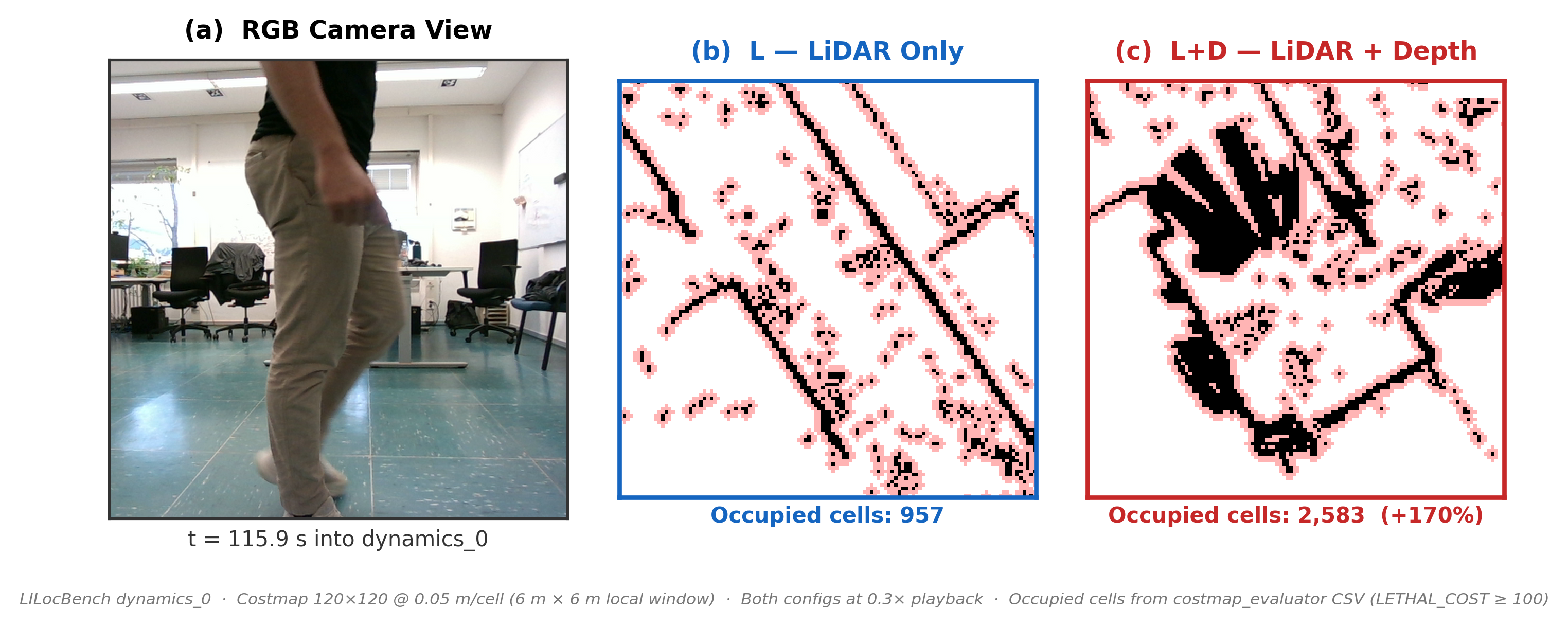

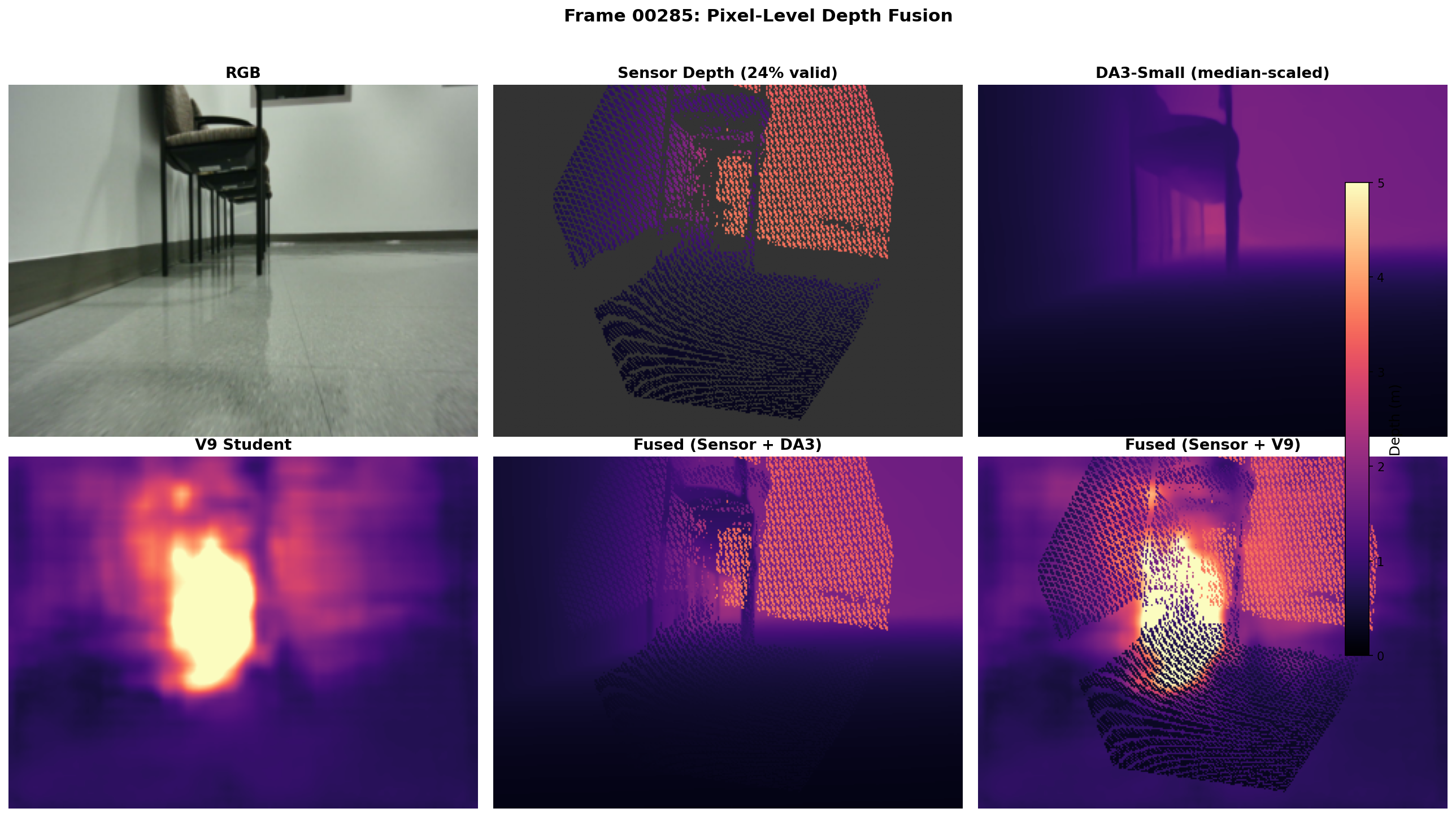

Vortex: Autonomous RC Car with Edge-Deployed Distilled Perception

NYU, Bell Labs Funded (MS Project), Jan 2025-Present

Built a 1/10-scale autonomous robot from scratch: a Traxxas Maxx 4S chassis, a VESC MINI v6 motor controller (FOC, 60A), and a Teensy 4.1 that handles dual AS5600 magnetic-encoder odometry and servo control over a custom serial protocol, with ELRS RC for teleoperation. Sensors include an RPLiDAR S2 (18m range) and an Orbbec Femto Bolt (RGB + ToF depth + 200Hz IMU), all running on a Jetson Orin Nano 8GB.

The perception problem: the ToF camera returns 77% invalid pixels on polished floors and glass, leaving the Nav2 costmap blind to obstacles that lie entirely outside the 2D LiDAR's scan plane. I built a selective depth-failure recovery system that detects invalid pixels and substitutes learned monocular depth only where the sensor fails, keeping LiDAR as the primary SLAM anchor. The learned depth adds 55% more obstacle evidence in a live reflective corridor and 170% more occupied costmap cells on the LILocBench dynamic benchmark. In closed-loop Gazebo simulation, a corridor-specialized student achieves 9/10 navigation success with zero collisions, matching the ground-truth depth baseline.

The model is an EfficientViT-B1 student (5.31M params) distilled from Depth Anything v3 + SAM2 teachers for joint depth and segmentation, trained on NYU HPC (L40S GPUs). Depth scale is recovered per frame via median alignment against valid ToF pixels. The model runs at 21.9 FPS on the Jetson via TensorRT FP16.

The full ROS 2 stack was built from scratch: custom nodes for VESC motor control (reverse-engineered UART protocol for firmware 6.06), encoder odometry, TensorRT inference, depth fusion, and class-aware costmap generation. Navigation uses Nav2 with the MPPI controller, AMCL localization, and SLAM Toolbox, evaluated with a 7-config costmap ablation study across two environments.

Tech: ROS 2 Humble, Nav2, PyTorch, TensorRT, ONNX, Gazebo, Python, C++
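
The selective recovery and per-frame median alignment can be sketched in a few lines of NumPy. This is a hypothetical illustration, not the project's ROS 2 node: it assumes the student outputs depth up to an unknown global scale (not inverse depth), treats zero, NaN, or out-of-range ToF readings as invalid, uses hypothetical range limits, and takes the ratio of medians over pixels where both sources are usable as the scale.

```python
import numpy as np


def fuse_depth(tof_depth, mono_depth, min_valid=0.1, max_valid=10.0, min_pixels=100):
    """Keep valid ToF depth; fill only the invalid pixels with scale-aligned mono depth.

    tof_depth:  metric ToF depth [m]; 0, NaN, or out-of-range readings count as invalid.
    mono_depth: student-network depth, same shape, assumed correct up to a global scale.
    min_valid / max_valid / min_pixels are hypothetical defaults.
    """
    tof = np.asarray(tof_depth, dtype=np.float64)
    mono = np.asarray(mono_depth, dtype=np.float64)
    valid = np.isfinite(tof) & (tof >= min_valid) & (tof <= max_valid)
    mono_ok = np.isfinite(mono) & (mono > 0)
    anchor = valid & mono_ok  # pixels where both sources can be compared
    if anchor.sum() < min_pixels:
        # Too little valid ToF to estimate a scale: leave the holes unfilled (NaN).
        return np.where(valid, tof, np.nan)
    # Per-frame median alignment: ratio of medians over the shared valid pixels.
    scale = np.median(tof[anchor]) / np.median(mono[anchor])
    # Sensor depth wins wherever it is valid; scaled mono depth fills the rest.
    fused = np.where(valid, tof, scale * mono)
    fused[~valid & ~mono_ok] = np.nan  # neither source usable
    return fused
```

Leaving valid ToF pixels untouched means the network can only add obstacle evidence where the sensor has none, which matches the "substitute only where the sensor fails" design.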

Reinforcement Learning for Quadruped Locomotion

NYU, 2024

Trained a Unitree Go2 robot to walk using PPO in NVIDIA Isaac Lab. Implemented reward shaping for smooth actions, gait coordination (Raibert heuristic), and body stability, and added an actuator friction model with domain randomization for sim-to-real transfer. The final policy tracks velocity commands at nearly 2x the baseline targets on both flat and rough terrain.

Tools: Isaac Lab, PyTorch, PPO, NYU HPC | GitHub
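
The Raibert-heuristic gait term can be illustrated as a footstep-placement penalty. This simplified sketch is an assumption, not the trained policy's reward: it handles a single environment in NumPy rather than batched Isaac Lab tensors, uses the classic placement rule (land each foot half a stance-stride ahead of its neutral point) without a velocity-feedback term, and the function and argument names are hypothetical.

```python
import numpy as np


def raibert_footstep_penalty(foot_xy_body, nominal_xy_body, cmd_vel_xy, stance_duration):
    """Sum of squared errors between actual and Raibert-desired foot positions (x, y)."""
    foot = np.asarray(foot_xy_body, dtype=float)        # (4, 2) feet in the body frame [m]
    nominal = np.asarray(nominal_xy_body, dtype=float)  # (4, 2) neutral stance under the hips [m]
    v_cmd = np.asarray(cmd_vel_xy, dtype=float)         # (2,) commanded body velocity [m/s]
    # Land each foot half a stance-stride ahead of its neutral point so that it
    # sweeps symmetrically under the hip while in contact.
    desired = nominal + 0.5 * stance_duration * v_cmd
    return float(np.sum((foot - desired) ** 2))
```

In a reward function this would typically enter as `-w * penalty` for some weight `w`, so the policy is nudged toward velocity-consistent footholds without being forced onto them.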

Autonomous Person Following on Boston Dynamics Spot

NYU, 2025

Built a real-time person-following system for Boston Dynamics Spot using visual servoing. The robot detects people with YOLOv8, computes lateral, distance, and pitch errors from the bounding box, and generates smooth velocity commands at 10Hz. Body pitch control enables tracking people on stairs and slopes. Currently exploring navigation world models to move from reactive control to predictive navigation, implementing ViNT (Visual Navigation Transformer) and GNM (General Navigation Model) for goal-conditioned navigation and cross-robot transfer learning.

Tech: Python, PyTorch, YOLOv8, OpenCV, ZED SDK, Spot SDK, Docker, CUDA
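
The bounding-box servoing loop can be sketched as three proportional controllers followed by output smoothing. The gains, limits, target box height, and class names below are hypothetical; the sketch uses box height as the distance cue, and sending the resulting commands through the Spot SDK is not shown.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass
class FollowGains:
    k_forward: float = 1.5      # m/s per unit of box-height error (hypothetical)
    k_yaw: float = 1.0          # rad/s per unit of normalised horizontal error
    k_pitch: float = 0.3        # rad per unit of normalised vertical error
    target_height: float = 0.6  # desired box height as a fraction of image height
    max_forward: float = 1.0    # m/s
    max_yaw: float = 1.0        # rad/s
    max_pitch: float = 0.3      # rad
    alpha: float = 0.3          # low-pass weight given to the newest command


def clamp(x: float, limit: float) -> float:
    return max(-limit, min(limit, x))


class PersonFollower:
    """Proportional visual servoing on a person bounding box (hypothetical sketch)."""

    def __init__(self, width: int, height: int, gains: Optional[FollowGains] = None):
        self.w, self.h = width, height
        self.g = gains or FollowGains()
        self.cmd = (0.0, 0.0, 0.0)  # (forward m/s, yaw rate rad/s, body pitch rad)

    def step(self, box: Sequence[float]) -> Tuple[float, ...]:
        """Call once per detection (e.g. at 10 Hz) with box = (x1, y1, x2, y2) in pixels."""
        x1, y1, x2, y2 = box
        g = self.g
        lateral = ((x1 + x2) / 2 - self.w / 2) / (self.w / 2)   # -1 left .. +1 right
        vertical = ((y1 + y2) / 2 - self.h / 2) / (self.h / 2)  # -1 top .. +1 bottom
        size_err = g.target_height - (y2 - y1) / self.h         # > 0: person too far away
        raw = (
            clamp(g.k_forward * size_err, g.max_forward),
            clamp(-g.k_yaw * lateral, g.max_yaw),      # CCW-positive yaw: turn toward the person
            clamp(g.k_pitch * vertical, g.max_pitch),  # sign depends on the body-frame convention
        )
        # Exponential smoothing keeps detector jitter from jerking the robot.
        self.cmd = tuple(g.alpha * r + (1 - g.alpha) * c for r, c in zip(raw, self.cmd))
        return self.cmd
```

A person centred in the frame at the target size produces zero commands; a small box on the right side produces forward motion and a clockwise (negative) yaw rate.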

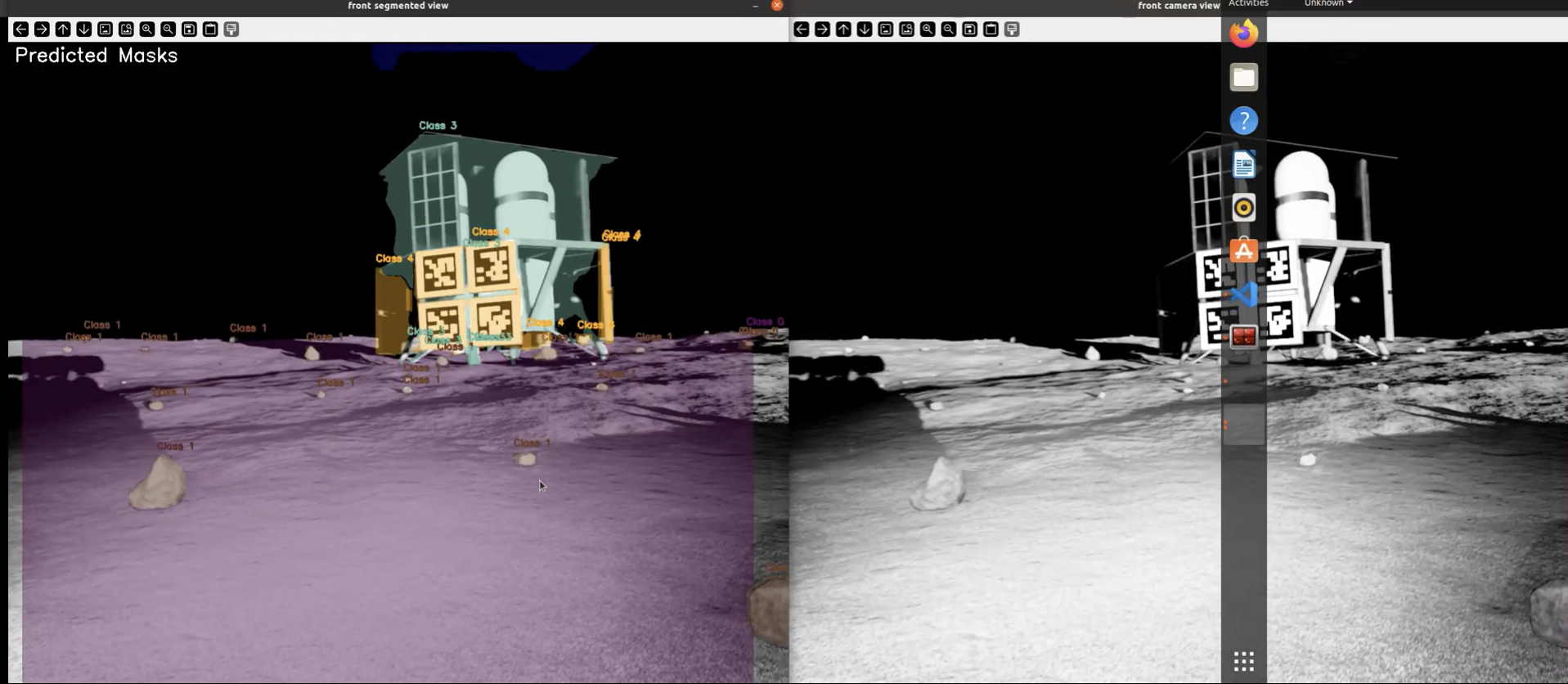

Lunar Autonomy Challenge

NASA-style Lunar Autonomy Challenge, 2024-Present

Building an autonomous navigation stack for a simulated lunar rover that explores unknown terrain without GPS or prior maps. The system integrates ORB-SLAM3 pose estimation with stereo depth, voxel and height mapping, perception-aware costmaps, A* planning, and pure-pursuit + PID control.

What makes it different: depth is treated as uncertain, not as ground truth. Stereo uncertainty is carried through into mapping and planning, so the rover penalizes and actively avoids low-confidence areas such as shadows, crater rims, and texture-poor regions. For stereo, SGBM is the default working backend, with FoundationStereo integrated as a configurable alternative when a model checkpoint is provided.

Tech: Python, C++, ORB-SLAM3, FoundationStereo, SGBM, OpenCV, ROS 2, PyTorch
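
Carrying stereo uncertainty into planning can be illustrated with an A* search whose step cost grows with per-cell depth uncertainty. This sketch is an assumption rather than the challenge stack's planner: uncertainty is a 0-1 grid, cells above the hypothetical `max_unc` threshold are treated as untraversable, and `w_unc` trades path length against confidence. The Manhattan heuristic stays admissible because every step costs at least 1.

```python
import heapq

import numpy as np


def plan(occupied, uncertainty, start, goal, w_unc=5.0, max_unc=0.9):
    """4-connected A* on a grid; returns a list of (row, col) cells or None."""
    occ = np.asarray(occupied, dtype=bool)
    unc = np.asarray(uncertainty, dtype=float)
    rows, cols = occ.shape
    blocked = occ | (unc > max_unc)  # too uncertain to trust counts as an obstacle

    def h(cell):
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    best_g = {start: 0.0}
    parent = {start: None}
    frontier = [(h(start), 0.0, start)]
    closed = set()
    while frontier:
        _, cost, cell = heapq.heappop(frontier)
        if cell in closed:
            continue
        if cell == goal:  # walk parents back to the start
            path = []
            while cell is not None:
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        closed.add(cell)
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if not (0 <= nr < rows and 0 <= nc < cols) or blocked[nr, nc]:
                continue
            # Base step cost 1 plus a penalty for entering a low-confidence cell.
            new_cost = cost + 1.0 + w_unc * unc[nr, nc]
            if new_cost < best_g.get(nxt, float("inf")):
                best_g[nxt] = new_cost
                parent[nxt] = cell
                heapq.heappush(frontier, (new_cost + h(nxt), new_cost, nxt))
    return None
```

With `w_unc = 0` this reduces to shortest-path A*; raising it makes the rover accept longer detours around shadows and crater rims.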

CityWalker-EarthRover Integration

NYU, AI4CE Lab, 2024-Present

Deployed CityWalker (CVPR 2025) on a FrodoBots EarthRover for sidewalk navigation. The model was trained on YouTube walking videos and had never seen this robot, so the entire deployment is zero-shot. Built the full pipeline from camera input to differential-drive motor commands: preprocessing, waypoint prediction, coordinate transforms, and trajectory tracking.

Also extended CityWalker with Depth Barrier Regularization (DBR), which penalizes waypoints directed toward obstacles within a 0.5m safety margin. DBR uses Depth-Anything-V2 only at training time, so inference remains depth-free. Offline results on CityWalk show a 15% reduction in depth violations and a 23% increase in minimum depth margin, while keeping navigation accuracy within 3% of the baseline.
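
One plausible form of a depth-barrier penalty is a hinge on waypoint clearance. The sketch below is hypothetical, since DBR's exact formulation is not given here: it assumes robot-frame waypoints (x forward, y left) in front of the camera, a per-column nearest-obstacle depth derived from a Depth-Anything-V2 map, and a pinhole camera with horizontal field of view `hfov_rad`. It is written in NumPy for readability; a training loss would use differentiable PyTorch operations.

```python
import numpy as np


def depth_barrier_penalty(waypoints, column_depth, hfov_rad, margin=0.5):
    """Mean hinge penalty on waypoints that come within `margin` metres of an obstacle."""
    wp = np.asarray(waypoints, dtype=float)        # (N, 2): x forward, y left [m]
    depth = np.asarray(column_depth, dtype=float)  # (W,): nearest obstacle per image column [m]
    width = depth.shape[0]
    fx = (width / 2) / np.tan(hfov_rad / 2)        # pinhole focal length in pixels
    cx = (width - 1) / 2
    bearing = np.arctan2(wp[:, 1], wp[:, 0])       # positive = left of the optical axis
    # Project each waypoint's bearing to an image column (image u grows to the right).
    cols = np.clip(np.round(cx - fx * np.tan(bearing)), 0, width - 1).astype(int)
    clearance = depth[cols] - np.linalg.norm(wp, axis=1)
    # Hinge: zero once a waypoint keeps at least `margin` metres to the obstacle.
    return float(np.mean(np.maximum(0.0, margin - clearance)))
```

Because depth only appears inside the loss, the trained network itself still needs no depth input at inference.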

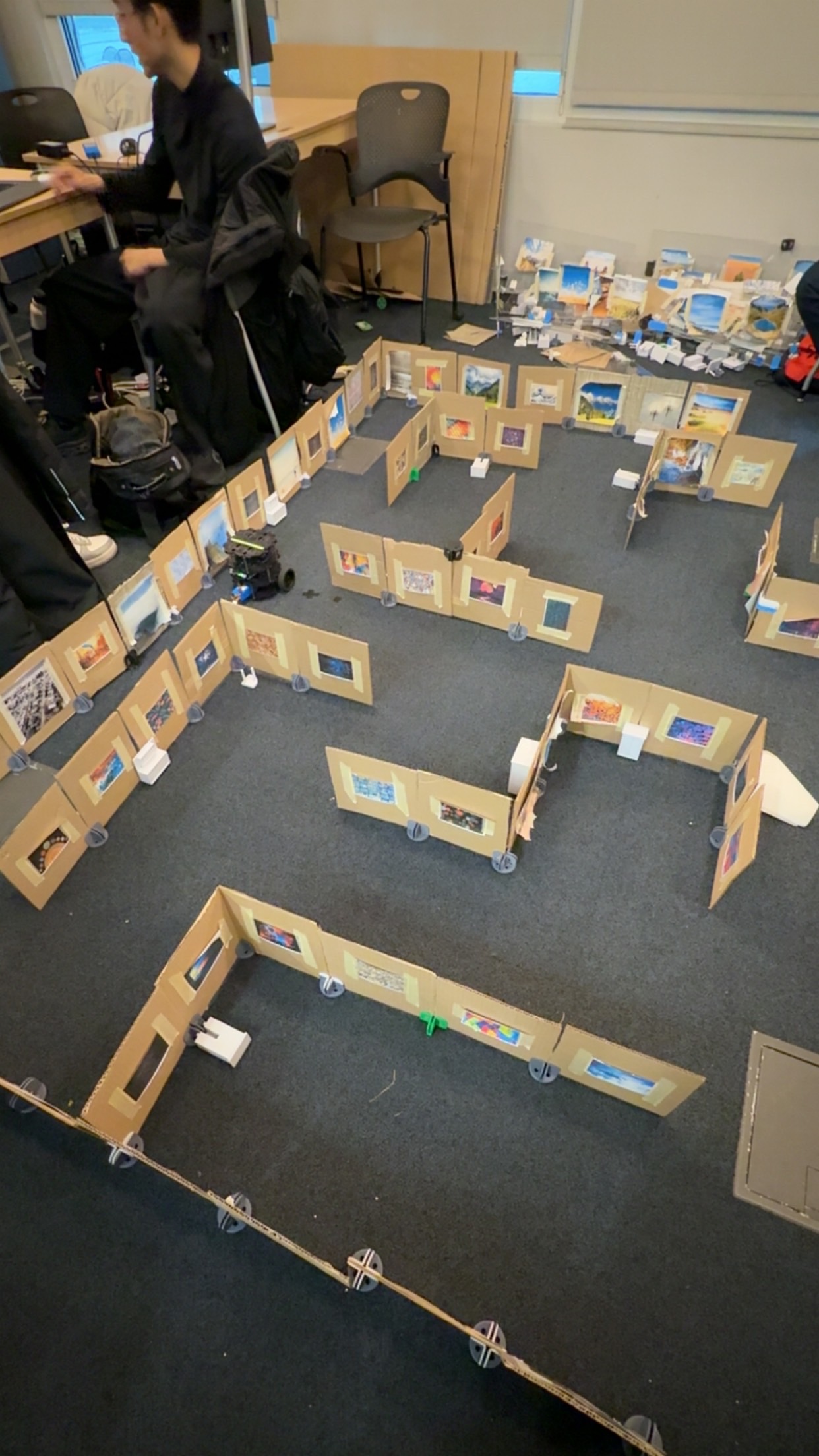

VIP Self-Drive - Student Leader

NYU Vertically Integrated Projects, AI4CE Lab, Faculty: Prof. Chen Feng, Sep 2024-Present

Currently leading the VIP Self-Drive team, integrating vision-based navigation models (MBRA, CityWalker) with the FrodoBots EarthRover for deployment and evaluation. Also leading NYU's hosting of Earth Rover Challenge 3, including implementation of the NYU tracks. Previously built camera-only TurtleBot3 navigation using ORB visual SLAM and CosPlace + SuperGlue (79% inlier ratio); perception work includes RANSAC plane fitting, ICP alignment, and YOLOv11 + ByteTrack tracking.

Tech: ROS 2 Humble, PyTorch, CosPlace, SuperGlue, OpenCV, YOLOv11, ByteTrack, ORB SLAM, TurtleBot3, FrodoBots EarthRover
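
RANSAC plane fitting, as used for ground or wall extraction, follows a sample, score, refit loop. A minimal NumPy sketch, assuming an (N, 3) point cloud in metres and hypothetical parameter values (2 cm inlier threshold, 200 iterations), with a final least-squares refit via SVD on the inliers:

```python
import numpy as np


def ransac_plane(points, threshold=0.02, iterations=200, seed=0):
    """Fit a plane dot(n, p) + d = 0 to an (N, 3) cloud; returns ((n, d), inlier mask)."""
    pts = np.asarray(points, dtype=float)
    rng = np.random.default_rng(seed)
    best_inliers = np.zeros(len(pts), dtype=bool)
    best_plane = None
    for _ in range(iterations):
        # 1. Sample: three random points define a candidate plane.
        a, b, c = pts[rng.choice(len(pts), size=3, replace=False)]
        normal = np.cross(b - a, c - a)
        length = np.linalg.norm(normal)
        if length < 1e-9:
            continue  # collinear sample, no unique plane
        normal /= length
        d = -normal @ a
        # 2. Score: count points within `threshold` metres of the plane.
        inliers = np.abs(pts @ normal + d) < threshold
        if inliers.sum() > best_inliers.sum():
            best_inliers, best_plane = inliers, (normal, d)
    if best_plane is None:
        return None, best_inliers
    # 3. Refit: least-squares plane through the inliers (smallest singular vector).
    inlier_pts = pts[best_inliers]
    centroid = inlier_pts.mean(axis=0)
    _, _, vt = np.linalg.svd(inlier_pts - centroid)
    normal = vt[-1]
    return (normal, -normal @ centroid), best_inliers
```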

HSRN Robot - Data Center Automation

NYU, 2024-Present

Multi-robot coordination for data center tasks using ROS, Corelink, and sensor fusion.

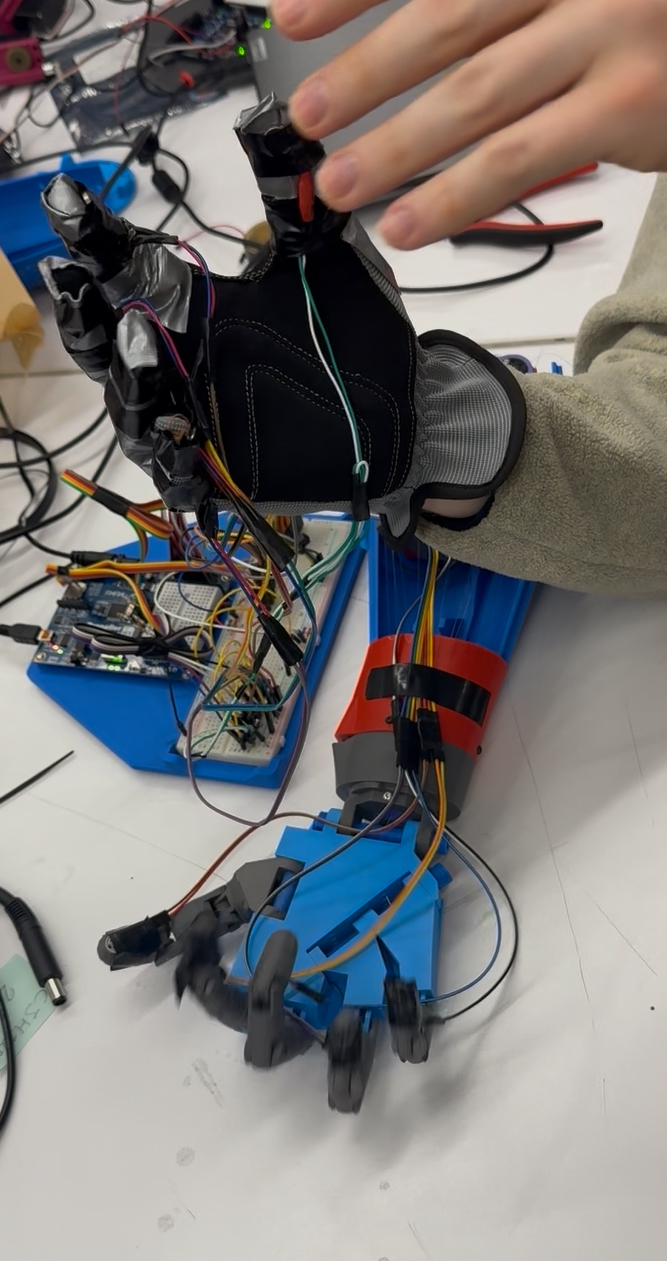

The S.L.A.P. Hand - Gesture-Controlled Robotic Hand

Undergraduate Major Project

S.L.A.P. (Simultaneous Linked Articulation Project) started as my undergraduate project and evolved over time. It began with Arduino and flex sensors for finger tracking, then moved to Propeller microcontrollers for better multi-servo control, and eventually to a Raspberry Pi for more computational power. The biggest shift was moving from flex sensors to vision-based control, using Google's MediaPipe for hand tracking. The system now includes haptic feedback for tactile sensing.

Tech: Raspberry Pi, Arduino, Propeller, Google MediaPipe, MPU6050, Haptic Feedback
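
Vision-based control can be sketched as turning hand landmarks into servo commands. This is an illustration under assumptions, not the project's code: it takes 21 (x, y, z) points in MediaPipe's hand-landmark order (index finger MCP/PIP/TIP = 5/6/8, and so on), measures flexion at each finger's PIP joint, and linearly maps a hypothetical 0-110° flexion range to a 0-180° hobby-servo command. The thumb is omitted for brevity.

```python
import math

# (MCP, PIP, TIP) landmark indices in MediaPipe's 21-point hand model.
FINGERS = {
    "index": (5, 6, 8),
    "middle": (9, 10, 12),
    "ring": (13, 14, 16),
    "pinky": (17, 18, 20),
}


def joint_angle(a, b, c):
    """Angle ABC in degrees between 3D points a, b, c (180 = straight)."""
    ba = [a[i] - b[i] for i in range(3)]
    bc = [c[i] - b[i] for i in range(3)]
    norm = math.hypot(*ba) * math.hypot(*bc)
    if norm == 0:
        return 180.0  # degenerate input: treat the finger as straight
    cos_angle = sum(x * y for x, y in zip(ba, bc)) / norm
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_angle))))


def servo_angles(landmarks, max_flex=110.0):
    """Map per-finger flexion (0 = straight) to a 0-180 degree servo command."""
    out = {}
    for name, (mcp, pip, tip) in FINGERS.items():
        flex = 180.0 - joint_angle(landmarks[mcp], landmarks[pip], landmarks[tip])
        out[name] = round(180.0 * min(max(flex / max_flex, 0.0), 1.0))
    return out
```

A straight finger maps to 0 and a 90° bend maps to about 147, so each servo mirrors its finger proportionally.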

B.A.R.K. Door - IoT Pet Access System

Personal Project

B.A.R.K. (Bluetooth Actuated Remote Key) Door is a smart pet door that uses RFID tags to recognize authorized pets and Bluetooth for manual control. It is built with a BS2 microcontroller and servo mechanisms for the locking system.

Tech: BS2, RFID, Bluetooth, IoT, Servo Mechanisms

E.S.V.C. - Solar-Powered Electric Vehicle

Electric Solar Vehicle Championship, Team Solarians 4.0

Designed the chassis for our entry in the Electric Solar Vehicle Championship (60+ competing teams). Used CATIA V5 for CAD and ANSYS R16.2 for structural analysis, optimizing an AISI 4130 steel tubular frame for low weight while meeting safety requirements.

Tech: CATIA V5, ANSYS R16.2

Technical Skills

Languages: Python, C++, MATLAB

Education

M.S. Mechatronics and Robotics

B.Tech. Mechanical Engineering

Template from Jon Barron. Last updated: January 2025.